What this is about (with context and in not too technical terms)

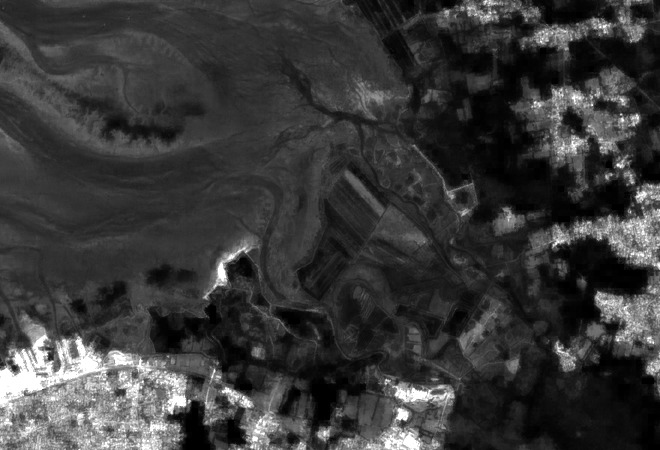

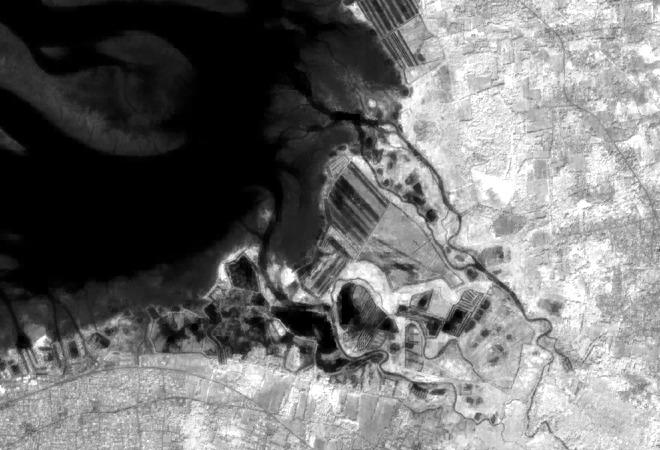

This is a method for improving satellite images. These images are provided with a resolution (i.e. pixel size on the ground) that results from technical compromises. Imagine taking a picture with a camera at night. You have two options: either wait long enough for sufficient light to come in (long exposure), or trigger the sensor with too little light (high ISO value). The first choice results in blurry images for objects in motion, while the second results in snow-like noise. Satellites move along their orbit, so they can hardly wait a long time. And poor-quality, noisy images are not an option either.

What some satellite makers do is take the light from all colors at the same time and produce black and white images. More light is collected than when filtering each color separately. But this is not an option when the goal is to survey, for example, how green vegetation evolves in an area compared with bare brown soil. In that case, precise colors are required. However, the more filtering is performed to measure a very specific color, the less light remains. A common choice is then to reduce the image resolution. If, instead of measuring light coming from square pixels of 10×10 meters, light is accumulated on pixels of 20×20 meters, then there is 4 times more light per pixel. This is unfortunate, as details are now less visible, but at least we can trust the measurements, since the snow-like noise is kept under control.

So, very often, satellite images are provided with pixel sizes that depend on the color that is measured (e.g. red, green, blue, infrared, ...). This is the case for the Sentinel-2 satellites, which present both cases: less precise colors at high pixel resolutions and more precise colors at lower pixel resolutions.
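The trade-off between 10×10 meter and 20×20 meter pixels can be checked with a few lines of Python. This is only an illustrative sketch: the photon counts are made up, `bin_pixels` is a hypothetical helper name, and the noise estimate assumes that photon (shot) noise dominates, in which case the signal-to-noise ratio grows as the square root of the collected light.

```python
import math


def bin_pixels(image, factor=2):
    """Sum non-overlapping factor x factor blocks of a 2-D list of counts."""
    rows, cols = len(image), len(image[0])
    if rows % factor or cols % factor:
        raise ValueError("image size must be a multiple of the binning factor")
    return [
        [
            sum(image[r + dr][c + dc] for dr in range(factor) for dc in range(factor))
            for c in range(0, cols, factor)
        ]
        for r in range(0, rows, factor)
    ]


# Made-up photon counts on a 4 x 4 grid of 10 m pixels.
fine = [
    [100, 104, 96, 100],
    [98, 102, 100, 104],
    [50, 52, 48, 50],
    [51, 49, 50, 52],
]

# The same scene seen with 20 m pixels: each value collects about 4 times more light.
coarse = bin_pixels(fine)
print(coarse)  # [[404, 400], [202, 200]]

# Assumption: shot-noise-limited sensor, so SNR is proportional to sqrt(light).
# Collecting 4 times more light then doubles the signal-to-noise ratio.
snr_gain = math.sqrt(4 * 100) / math.sqrt(100)
print(snr_gain)  # 2.0
```

The total light is unchanged by binning; it is only gathered into fewer, larger pixels, which is why the detail is lost while the measurements become more reliable.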

The method presented in the article shows how to take the details from the colors that have the highest resolution and propagate these details to all other colors. As a result, we get an image where all colors (i.e. "spectral bands") have the best resolution.

Abstract and article

This resolution enhancement method is designed for multispectral and multiresolution images, such as those provided by the Sentinel-2 satellites (but not limited to them). Starting from the highest resolution bands, band-dependent information (reflectance) is separated from information that is common to all bands (geometry of scene elements). This model is then applied to unmix low-resolution bands, preserving their reflectance, while propagating band-independent information to preserve the sub-pixel details.
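To make the "preserve the reflectance, propagate the details" idea concrete, here is a deliberately simplified 1-D toy. It is not the algorithm of the article: it is a plain ratio-based detail injection, used here only to illustrate the constraint that the sub-pixels of a sharpened low-resolution pixel should average back to the value that was measured. The function name and all values are made up for the example.

```python
def sharpen_preserving_mean(high_res, low_res, factor=2):
    """Toy 1-D detail injection (NOT the article's algorithm).

    Each low-resolution value is split into `factor` sub-pixels whose
    relative weights follow the high-resolution band, scaled so that the
    sub-pixels average back to the original low-resolution value.
    """
    if len(high_res) != len(low_res) * factor:
        raise ValueError("high_res must be `factor` times longer than low_res")
    out = []
    for i, value in enumerate(low_res):
        block = high_res[i * factor:(i + 1) * factor]
        block_mean = sum(block) / factor
        if block_mean == 0:
            # No usable detail in this block: fall back to a flat value.
            out.extend([value] * factor)
        else:
            out.extend(value * h / block_mean for h in block)
    return out


high = [0.10, 0.30, 0.20, 0.20]  # fine band (e.g. 10 m), made-up reflectances
low = [0.40, 0.50]               # coarse band (e.g. 20 m), made-up reflectances

sharp = sharpen_preserving_mean(high, low)

# The first coarse pixel inherits the dark/bright split seen in the fine band,
# the second one stays flat because the fine band shows no detail there.
# In both cases the sub-pixels average back to the measured coarse value.
for i, value in enumerate(low):
    block = sharp[2 * i:2 * i + 2]
    assert abs(sum(block) / 2 - value) < 1e-12
```

A simple ratio like this mixes band-dependent and band-independent information, which is precisely what the article's model is designed to separate; refer to the preprint for the actual formulation.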

The article was published in the TGRS journal. A preprint version of the article is available here as well as on ArXiv.org. The preprint is actually more detailed, because of the journal's space restrictions, and is thus the recommended version to read.

Software, with application to Sentinel-2

NEW: Download and try the super-resolution plugin for ESA's SNAP application, version 5 or later. It works on Linux, Win64 and Win32. To install it, open the "tools/plugins" menu, go to the "downloaded" tab and select the file you just downloaded. Install it, then restart SNAP (mandatory). The source code is provided here.

The method is quite computationally intensive. Please try a small test region of interest first, and only then expand it according to your needs and available computing power. The graph processing framework is supported. A batch version based on Python and GDAL is also available, for use on clusters where SNAP cannot be installed (see below).
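When working outside SNAP, one possible way to prepare such a small test region is to crop the input rasters with GDAL before running anything expensive. The sketch below is not part of the distributed batch script: `aligned_window` and `crop_with_gdal` are hypothetical helper names and the file names in the usage comment are placeholders. Snapping the window to multiples of 6 pixels is a convenience assumed here, so that the same ground footprint covers whole pixels of the 10 m, 20 m and 60 m Sentinel-2 bands; it is not a documented requirement of the software.

```python
def aligned_window(xoff, yoff, xsize, ysize, raster_w, raster_h, step=6):
    """Snap a pixel window (in 10 m pixels) outward to multiples of `step`
    and clamp it to the raster extent.

    With step=6 the window covers whole 20 m and 60 m pixels,
    since 6 x 10 m = 3 x 20 m = 1 x 60 m.
    Returns (xoff, yoff, xsize, ysize).
    """
    x0 = (xoff // step) * step
    y0 = (yoff // step) * step
    x1 = -(-(xoff + xsize) // step) * step  # round up to a multiple of step
    y1 = -(-(yoff + ysize) // step) * step
    x0, y0 = max(x0, 0), max(y0, 0)
    x1, y1 = min(x1, raster_w), min(y1, raster_h)
    if x1 <= x0 or y1 <= y0:
        raise ValueError("window does not intersect the raster")
    return x0, y0, x1 - x0, y1 - y0


def crop_with_gdal(src_path, dst_path, window):
    """Write the given pixel window of src_path to dst_path using GDAL."""
    # Imported lazily so that aligned_window() can be used without GDAL installed.
    from osgeo import gdal

    gdal.Translate(dst_path, src_path, srcWin=list(window))


# A roughly 5 x 5 km test region inside a 10980 x 10980 pixel (10 m) tile.
window = aligned_window(1000, 2005, 500, 500, 10980, 10980)
print(window)  # (996, 2004, 504, 504)

# Hypothetical usage, with placeholder file names:
# crop_with_gdal("band_10m.tif", "band_10m_roi.tif", window)
```

The matching window for a 20 m band is obtained by dividing all four numbers by 2, and for a 60 m band by dividing them by 6.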

The super-resolution algorithm is written in C++ and can be used directly as a C++ library. It is also wrapped as a Python module, and accessible through a Java JNI interface (used by the above plugin).

The code (including the Python script for super-resolving Sentinel-2 images) was only tested under Linux (Debian testing/unstable, g++). The Win32/64 DLLs used by the above plugin were cross-compiled from Linux. If you port this to other compilers or operating systems, please mail me. I may then link your port here or include it in a future release (with due credits). You can download the latest version here.

This super-resolution algorithm is released as Free/Libre software under the LGPL v2.1 or later or, at your choice, under the Free/Libre CeCILL-C licence. The source code is maintained in my source repository; check it for updates.